Mobile World Congress 2026. Physical AI on the Rise

Photo: © Ulrich Buckenlei | Visoric GmbH

The Mobile World Congress 2026 in Barcelona has concluded, yet the signals from the event extend far beyond the exhibition halls. This year did not reveal an ordinary product cycle, but a new industrial context. Artificial intelligence is moving closer to networks, machines, and operational processes. Physical AI is taking on clearer form. Extended Reality is evolving from pure visualization into a productive interface. And digital infrastructures are beginning not only to connect, but to capture situations, evaluate them, and respond immediately.

This is the real message of the Mobile World Congress 2026. The time when connectivity was understood primarily as a transport layer is coming to an end. Networks, data spaces, models, and devices are converging into systems that support decisions or, in clearly defined contexts, trigger them autonomously. This creates a new technological foundation for industry, logistics, energy, healthcare, and services. Competitiveness increasingly depends on whether information is merely collected or transformed into actionable intelligence in real time.

Ulrich Buckenlei is an industry analyst for AI and Extended Reality. He reported directly from the Mobile World Congress in Barcelona.

From Connectivity Network to Decision Infrastructure

For many years, the architecture of digital systems followed a clear division of labor. Networks transported data. Central platforms stored and processed it. Applications accessed the results with a time delay. This principle was efficient as long as moderate response times were acceptable and processes did not have to react at high frequency to changing situations.

At the Mobile World Congress 2026, it became clear how strongly this logic is now changing. Networks are increasingly becoming active system layers. They detect load conditions, prioritize data flows, support local computing processes, and form the basis for AI driven automation. This shifts the focus from pure transmission toward operational intelligence. What matters is no longer only whether data arrives quickly, but whether it is translated into usable action at the right moment and in the right place.

This development fundamentally changes the understanding of infrastructure. Computing power, contextual knowledge, and system logic are moving closer to the places where processes actually happen. Especially in industrial applications, in connected machines, or in safety critical workflows, every millisecond counts. In such contexts, the real value does not come from centralized collection, but from local responsiveness.

- Networks are becoming active layers of system control

- Relevant decisions are moving closer to real processes

- Connectivity is turning into operational decision capability

AI infrastructure as the foundation of intelligent network and system architectures at the Mobile World Congress 2026 in Barcelona

Photo: © Ulrich Buckenlei | Visoric GmbH

Many exhibition stands made exactly this shift visible. On display were not isolated computing modules, but complete infrastructures in which chips, network technology, cooling, data management, and AI models are conceived as one coordinated system. It was especially striking that data centers, telecommunications, and industrial platforms are moving ever closer together thematically.

For companies, this creates a new task. They can no longer evaluate infrastructure by capacity alone, but must also judge its ability to embed decisions into ongoing processes. Whoever masters this layer is not simply building a faster system. They are creating the basis for intelligent workflows, adaptive services, and more resilient operating models.

Physical AI Leaves the Lab and Becomes an Industrial Topic

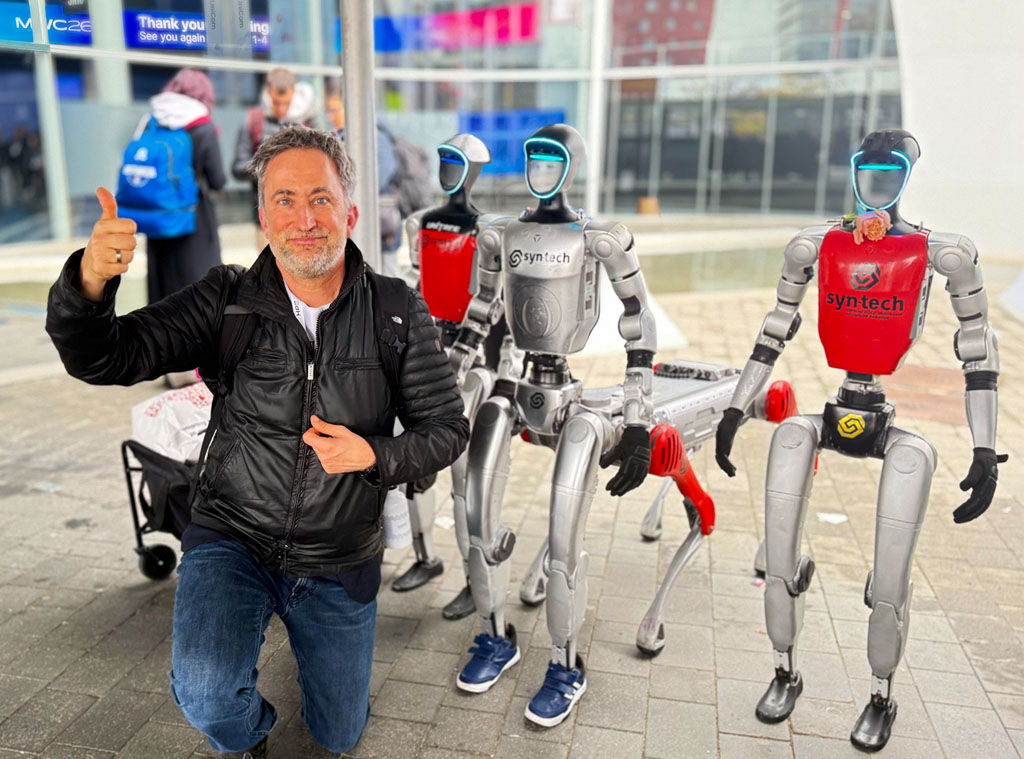

A second strong signal from the event was the visible rise of Physical AI. This refers to systems that do not merely compute their environment, but can perceive it, interpret it, and respond to it in physical form. This fundamentally changes the role of artificial intelligence. It is no longer limited to analytical interfaces or background processes, but appears as a technology capable of action.

This shift is highly relevant for many industries. As soon as AI is embedded in robotics, autonomous devices, mobile platforms, or assistance systems, a new type of technical application emerges. Perception, reasoning, and execution then interact directly. Machines recognize objects, assess situations, adapt movements, and take over tasks that previously required rigid programming or constant human supervision.

The Mobile World Congress 2026 showed that this topic no longer needs to be staged as something futuristic. Humanoid robots, precise motion sequences, and interactive demonstrations did not look like a distant vision of the future, but rather like early signs of operational scaling. What was interesting was less the spectacle of individual demos than the underlying systems question: which sensors, which models, which computing pathways, and which control layers are necessary for machines to act reliably in open environments?

- Physical AI connects perception, reasoning, and action

- Robots are evolving from fixed routines into learning systems

- Operational value emerges where AI takes over real tasks

Humanoid robot in a live demonstration at the Mobile World Congress 2026

Photo: © Ulrich Buckenlei | Visoric GmbH

Such systems mark a profound change for companies. Artificial intelligence is no longer understood only as a software building block in the backend. It becomes part of value creation itself. Wherever machines inspect, sort, assist, transport, or support, the boundary between digital model and physical process begins to shift.

This also explains why the term Physical AI gained such strong presence at the event. It stands for the next stage of development after generative and agentic AI. The question is no longer only what a model can say or plan. The question is how intelligence can be translated into real environments without losing safety, precision, or traceability.

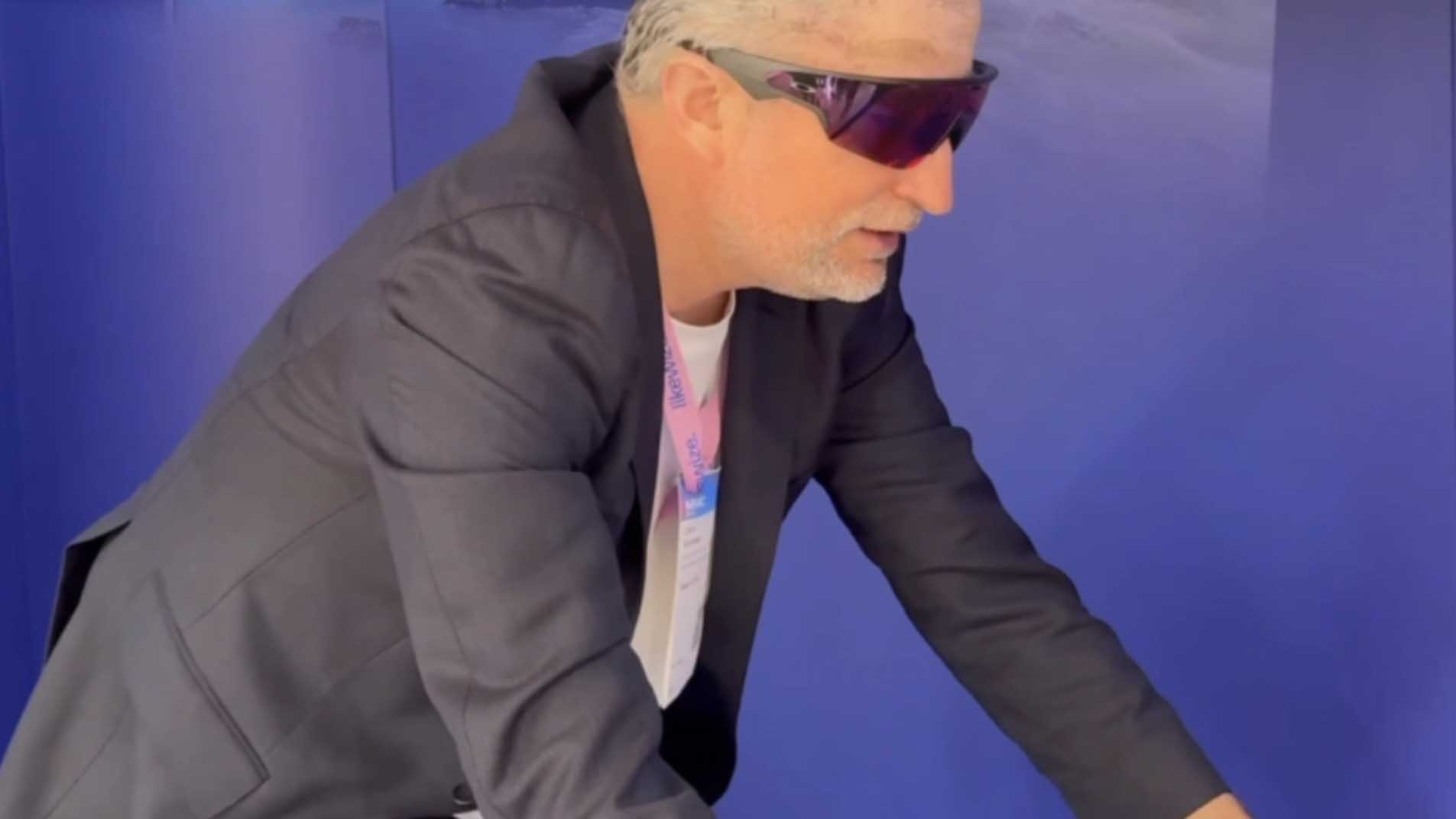

Wearable AI in Motion. When Smart Glasses Become a Real Time Interface

One particularly insightful impression of the Mobile World Congress 2026 came from a demonstration in which artificial intelligence no longer appeared as an abstract platform, but could be experienced directly on the body. This is exactly what could be seen in the cycling simulation at the Meta stand. Instead of a classic screen or a stationary control panel, a new form of wearable interface moved into the spotlight. Interaction happened through movement, perspective, language, and immediate perception within the context of use.

Such scenarios are technologically interesting because they bring together several lines of development. Smart glasses are evolving into compact interfaces that combine visual capture, acoustic feedback, and context related AI functions in a wearable format. In combination with a physical activity such as a cycling simulation, this creates a system that not only captures information, but also makes it interpretable in context. The interface thus shifts from the stationary device to the acting human being.

This is precisely where the scientific relevance of such demonstrations lies. In research, smart glasses, wearables, and real time feedback systems have for years been seen as a central field for sport, rehabilitation, and mobile assistance. The added value emerges where data is not only collected, but embedded into ongoing movement processes. Training, orientation, correction, and evaluation can thus move closer to the moment of action.

- Wearable AI connects perception, context, and action within a usage situation

- Real time feedback moves closer to movement, workload, and spatial orientation

- Interaction shifts from the screen to a body centered interface

Cycling simulation with Oakley Meta Vanguard AI Glasses at the Meta stand at the Mobile World Congress 2026

Photo: © Ulrich Buckenlei | Visoric GmbH

The image illustrates an important shift. AI is no longer staged here as an invisible background service, but as a body centered tool for situational assistance. Glasses of this class can capture the user’s perspective, deliver audio, and connect to digital functions through voice control. This creates a new human machine relationship that is especially relevant for mobile environments. The focus is not on later analysis, but on the direct coupling of perception and reaction.

This is especially exciting for training and simulation applications. In sports science and in research on biomechanical real time feedback, it has been clear for years that immediate feedback can significantly influence the quality of movement learning, correction, and motivation. Wearables and sensor based systems are therefore increasingly used not only for measurement, but as active support within the execution of action. AI glasses expand this principle by adding perspective data, voice based interaction, and a hands free form of assistance.

At the same time, the scene points to a broader industrial topic. What appears vividly in the cycling simulator is also relevant for maintenance, training, field service, and operational assistance. Wherever people move, make decisions, and need both hands for real tasks, wearable AI interfaces gain strategic importance. They can provide context aware information, document workflows, and make interaction with digital systems more natural.

The Mobile World Congress 2026 thus makes visible that the next phase of intelligent systems will not emerge only in data centers or network infrastructures. It is also emerging in body centered interfaces that integrate perception, communication, and assistance directly into real action situations. Smart glasses mark an important development step at exactly this point. They are not a replacement for comprehensive XR systems, but they show very precisely how AI is moving out of the backend and into everyday life and into work environments where people are on the move.

XR Becomes the Productive Surface of Complex Systems

As technical depth increases, so does the need for understandable interfaces. This is exactly where Extended Reality gains new strategic relevance at the Mobile World Congress 2026. XR is no longer only a format for demonstration, marketing, or experience design. It is becoming increasingly visible that spatial interfaces can take on a productive role in planning, training, maintenance, and collaboration.

The decisive advantage lies in the spatial translation of complex information. States, processes, models, and relationships are not only displayed on flat surfaces, but placed into a context that can be grasped immediately. Anyone working with machines, digital twins, or process chains gains a different access to complexity. Decisions do not become simpler, but they are prepared more clearly.

This is particularly relevant in industrial environments. Technical systems rarely consist of individual variables. They are spatial, dynamic, and highly interdependent. XR makes it possible to make exactly these relationships visible. Maintenance steps can be shown in context. Models can be discussed directly on the object. Simulations are not only viewed, but experienced and evaluated.

- XR translates abstract data into spatially understandable work surfaces

- Digital twins become more intuitive for teams to use

- Humans and AI come together in a shared space of action

XR hands on at the Mobile World Congress 2026 with a view toward the next device category in spatial computing

Photo: © Ulrich Buckenlei | Visoric GmbH

What was particularly interesting in Barcelona was that another XR ecosystem is visibly taking shape. Alongside existing platforms, new devices and operating systems are moving more strongly into focus. This makes it clear that spatial computing will not remain limited to a single manufacturer or a consumer context. For companies, what matters above all is that XR can be integrated as a workable interface into existing software and process landscapes.

That is exactly why XR at the Mobile World Congress 2026 is not just a side topic, but part of the broader infrastructure question. When AI prepares decisions, networks coordinate data flows, and digital twins model processes, an interface is needed through which people can understand and control these systems. XR is beginning to take on exactly this role.

The New Industrial Architecture Behind the Exhibition Image

Anyone who thinks through the many topics of the Mobile World Congress 2026 together can recognize an overarching structure. It is not about individual innovations standing next to each other. It is about the convergence of several technological layers into a new industrial architecture. Artificial intelligence is the connecting element here, but not as an isolated module. It operates simultaneously in networks, platforms, machines, simulation spaces, and user interfaces.

This architecture can be described as a transition from digital connectivity to industrial intelligence. AI native network logics create the foundation for adaptive communication. Computing and model infrastructures provide operational intelligence. Physical AI anchors these capabilities in real systems. Digital twins enable simulation, validation, and continuous improvement. XR finally creates the layer through which people can intervene in this complexity and work with it.

What matters is that all these layers interact. An intelligent network without application oriented models remains limited. A robot without a simulation space is harder to scale. A digital twin without an understandable interface remains expert knowledge. Only in combination do they yield what companies will truly need in the future: a system that does not merely move data, but translates it into perception, prediction, optimization, and action.

- AI native network logics connect communication and control

- Physical AI brings intelligence into real work processes

- Digital twins create safe spaces for training and optimization

- XR makes complex systems operationally accessible for people

- Competitive advantages arise from the interplay of these layers

Industrial Intelligence Architecture as a condensed image of technological convergence at the Mobile World Congress 2026

Visualization: XR Stager Research | Visoric GmbH

This gives the Mobile World Congress 2026 a clear emphasis. The future does not belong to the loudest individual innovations, but to the companies that form resilient overall systems out of networks, AI, simulation, and spatial interfaces. Those who understand this architecture early gain not only technological orientation, but also strategic speed.

Physical AI exemplifies this transformation. It makes visible that intelligence is leaving the screen and entering operational reality. That is exactly why questions of infrastructure, data model, governance, and interface will become more important in the coming years than the mere question of the next device or the next model name.

Video Impressions from the Mobile World Congress 2026

The Mobile World Congress in Barcelona remains one of the most important places to observe technological shifts at full scale. Telecommunications, semiconductors, platform strategies, industrial applications, robotics, and immersive systems meet here directly. What often appears abstract in strategy papers becomes tangible at the exhibition as a visible systems landscape.

This concentration was particularly evident in 2026. Network intelligence, Physical AI, new XR platforms, automated operating models, and simulation environments did not appear as separate fields of discussion, but as tightly connected elements of a new infrastructure. For analysts, technology leaders, and business decision makers, this is exactly what makes such events valuable. Trends can not only be named, but also assessed in terms of their practical maturity.

The following video shows personal impressions directly from the exhibition grounds in Barcelona. It conveys atmosphere, dynamism, and the international range of the developments on display.

Impressions from the Mobile World Congress 2026 in Barcelona

Video footage: © Ulrich Buckenlei | XR Stager Newsroom

The footage shows how strongly the technological narrative has shifted. The focus is no longer only on faster devices or new form factors. The central question is how systems become infrastructures capable of perception, learning, and action.

This is the core message of this year’s Mobile World Congress. The event does not mark an isolated technological leap, but a phase of consolidation. Connectivity becomes intelligence. Visualization becomes operational interaction. Digital models become productive spaces for decision making.

From the Exhibition Image to a Robust Corporate Strategy

The Mobile World Congress 2026 sends a clear signal to companies. Artificial intelligence is becoming infrastructure. Physical AI expands the operational reach of digital systems. XR is evolving into a productive interface. And digital twins create the spaces in which new applications can be safely prepared, tested, and optimized.

The central management question is therefore no longer whether these technologies will become relevant. Their relevance is already visible. The decisive question is which combination of these building blocks makes sense for a company’s own business model, in what sequence they should be built, and which organizational prerequisites are required. Many initiatives fail not because of missing technology, but because of poor prioritization, overambitious pilot projects, or an architecture that does not fit operational reality.

This is exactly the point where the Visoric expert team in Munich works. We support companies in assessing technological signals from events such as the Mobile World Congress and translating them into robust next steps. This ranges from trend evaluation and the formulation of realistic use cases to the development of a target architecture for AI, XR, digital twins, and connected infrastructures.

The Visoric expert team from Munich supports companies from market observation to an actionable transformation roadmap

Source: VISORIC GmbH | Munich

- MWC Analysis → Classification of relevant technologies for industry, value creation, and organizational model

- Strategic Evaluation → Examination of the role AI, XR, Physical AI, and digital twins can play in the company

- Use Case Development → Selection of realistic applications with visible operational benefit

- Target Architecture → Design of coherent systems across network, data space, model logic, and interface

- Pilot Validation → Early assessment of technical feasibility and economic viability

- Enablement → Workshops, management briefings, and decision foundations for the next steps

If you do not want to merely observe the signals of the Mobile World Congress 2026, but translate them into concrete courses of action, a structured conversation is worthwhile. Together, we clarify which technological building blocks are truly relevant for your company and how an actionable roadmap can emerge from them.

Contact Us:

Email: info@xrstager.com

Phone: +49 89 21552678

Contact Persons:

Ulrich Buckenlei (Creative Director)

Mobile: +49 152 53532871

Email: ulrich.buckenlei@xrstager.com

Nataliya Daniltseva (Project Manager)

Mobile: +49 176 72805705

Email: nataliya.daniltseva@xrstager.com

Address:

VISORIC GmbH

Bayerstraße 13

D-80335 Munich